GSA SER Verified Lists Vs Scraping

The Core Difference Between Verification and Raw Harvesting

Every marketer building links with automation eventually faces the same tactical question: should you buy a pre-screened database or scrape your own targets from the web? The "GSA SER verified lists vs scraping" question sits at exactly this crossroads. Understanding the structural difference between the two approaches determines not just your success rate but the overall health of your campaign footprint.

Scraping is the process of extracting URLs from search engines, footprint strings, comment fields, and public dumps in real time. A raw scrape contains everything that matched your query, including duplicate domains, sites that no longer resolve, heavily spammed platforms, and URLs behind login walls. In contrast, a verified list for GSA Search Engine Ranker is a curated asset where every entry has already passed platform compatibility checks and a basic submission test. The gap between a wild harvest and a verified set is where your time and hardware get consumed.

Data Quality and Platform Identification

When you rely on scraping, you are working with unclassified data. Your engine needs to identify the platform type, guess the registration form, and handle captcha triggers purely from the HTTP response. This leads to a high volume of failed submissions even if the URL is technically alive. Many scraped targets are identical engines hosted under different skins, causing GSA SER to waste threads on false variety. A verified list separates signal from noise by tagging the exact script identity and the form handler ahead of time, giving the engine a precise instruction set before the first POST request fires.

How Raw Scrapes Inflate CPU Waste

A scrape file pulled from targeted queries might yield fifty thousand lines, but aggressive deduplication often reveals that only fifteen percent represent unique platforms suitable for your campaign. The rest are parked pages, error responses, internal search results, or redirect chains that burn retries. Verified lists eliminate this initial filtration stage entirely. The supplier has already removed 404s, 500s, redirect loops, and domains under registry hold, leaving only URLs that returned a usable registration page on the processor's last pass.
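The deduplication step described above can be sketched in a few lines. This is a minimal illustration, not a feature of GSA SER: the function name `unique_hosts` and the sample URLs are hypothetical, and it treats two URLs as duplicates when they share a hostname (ignoring case and a leading `www.`).

```python
from urllib.parse import urlsplit


def unique_hosts(urls):
    """Keep the first URL seen for each hostname (hypothetical helper)."""
    seen = set()
    kept = []
    for raw in urls:
        url = raw.strip()
        if not url:
            continue
        try:
            host = urlsplit(url).hostname  # lowercased; None if no host part
        except ValueError:
            continue  # malformed line, e.g. broken IPv6 brackets
        if host is None:
            continue
        # Treat "www.example.com" and "example.com" as the same target.
        key = host[4:] if host.startswith("www.") else host
        if key not in seen:
            seen.add(key)
            kept.append(url)
    return kept


sample = [
    "http://example.com/forum/",
    "https://www.example.com/blog/post-1",
    "http://Example.org/index.php",
    "not a url",
    "",
]
print(unique_hosts(sample))
# ['http://example.com/forum/', 'http://Example.org/index.php']
```

Keying on the hostname rather than the full URL is a deliberate simplification; it collapses many pages of one site into a single candidate, which is what exposes how small the unique pool really is.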

Speed Through the Pipeline

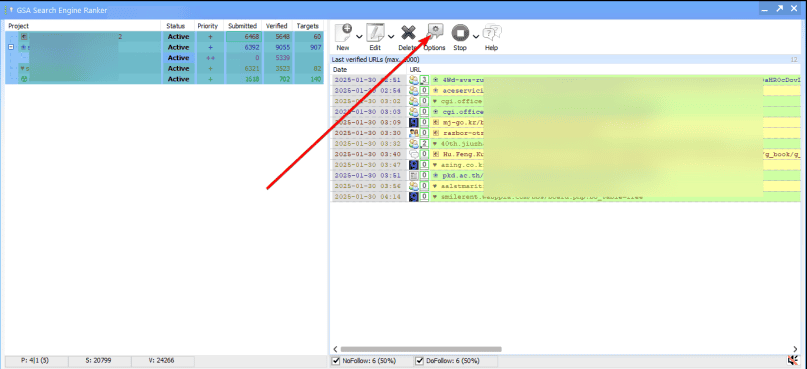

In a GSA SER environment, time-to-link is governed by thread efficiency. Raw scrapes force the software into discovery mode: it must probe for engine footprints before it can attempt registration. This probing generates extra HTTP requests, triggers rate-limiting firewalls faster, and fills the log with noise that obscures real errors. With verified lists, the engine can jump directly to the registration step because the platform template is known. This can triple the number of successful completions in the same submission window, especially when private proxies are the bottleneck resource.

The Registration Form Trap

Scraped links often point to front pages, tag archives, or individual articles rather than the signup path. GSA SER then burns attempts trying to find a form that doesn't exist on that exact URL. A verified list hands the engine the precise registration path, whether it's a phpBB ucp entry point, a WordPress wp-signup handler, or a Drupal user registration route. Those curated paths reduce "form not found" errors to near zero, which is one of the most important efficiency arguments in the "GSA SER verified lists vs scraping" debate for anyone running at scale.

Niche Relevance and Contextual Drift

Operators who scrape often focus on footprint volume and forget topical relevance. When you scrape for high-level footprints like "powered by wordpress" without additional filters, your target pool fills up with off-topic sites. Verified lists from niche-focused vendors are pre-segmented by category, language, and often engine version, letting your content land on sites where the topical neighborhood strengthens the link rather than flagging it. Scraping can attempt the same filtering through search operators, but it requires constant maintenance as search engine result pages shift their output patterns.

Maintaining Adversarial Resilience

A raw scrape taken today will be obsolete within weeks because anti-spam updates close platform registrations silently. Verified list providers re-run submission tests on a schedule and remove URLs that switched to invite-only, added a payment wall, or changed their platform engine. If you scrape only once and feed it into GSA SER for a month, the second half of that campaign is digging through dead soil. The "verified" attribute means the list carries a freshness stamp that scraping doesn't possess unless you continuously re-harvest and validate inside your own pipeline.
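The "freshness stamp" idea can be applied to a home-built list by storing the date each URL last passed a check and dropping anything older than a cutoff. The sketch below is an assumption-heavy illustration: the `(url, last_checked)` record shape, the function name `fresh_entries`, and the 14-day default are all hypothetical choices, not something the list vendors or GSA SER prescribe.

```python
from datetime import date, timedelta


def fresh_entries(entries, today, max_age_days=14):
    """Return URLs whose last successful check is within max_age_days.

    entries: iterable of (url, last_checked) pairs, last_checked a date.
    The 14-day default is an arbitrary illustrative value.
    """
    cutoff = today - timedelta(days=max_age_days)
    return [url for url, last_checked in entries if last_checked >= cutoff]


records = [
    ("http://a.example/register", date(2024, 5, 10)),
    ("http://b.example/signup", date(2024, 4, 1)),
]
print(fresh_entries(records, today=date(2024, 5, 15)))
```

Entries that fall out of the window are candidates for re-validation rather than deletion, since a target may still be open and simply unchecked.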

Log Hygiene and Error Interpretation

One underrated cost of scraping is the mental load of reading failure logs. When you import fifty thousand raw URLs, your server log explodes with varied HTTP status codes, DNS timeouts, and script detection misses. Spotting a genuine proxy failure or a platform ban becomes much harder in the noise. Verified lists deliver a cleaner signal because every URL is assumed alive at import; any failure then points directly to a proxy issue, a blacklisted IP range, or a content mismatch that you can address surgically.

Hybrid Strategies That Combine Both Worlds

The phrase "GSA SER verified lists vs scraping" suggests a binary choice, but advanced users layer the two. They acquire a core verified list to seed the campaign with stable targets that build a base link velocity. Simultaneously, they run a scrape-and-detect module that feeds fresh candidates into a custom evaluation queue. The engine tests those candidates against known platform fingerprints, and surviving URLs get promoted into the next cycle's "home-verified" set. This hybrid lowers dependency on external suppliers while keeping the initial success rate high enough to justify the proxy spend.

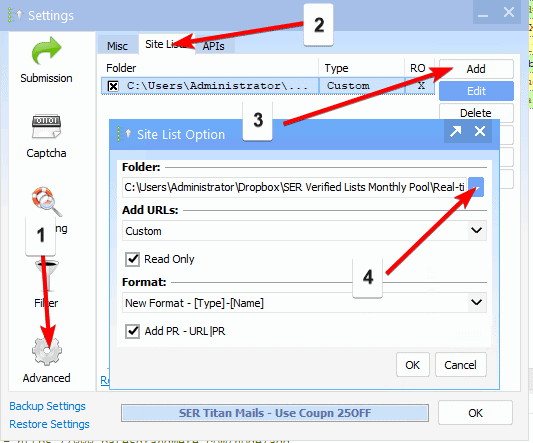

Building a Self-Verification Pipeline

If you commit to scraping, the missing piece is an internal verification stage. After harvesting, you must run candidate URLs through a lightweight headless browser script that confirms the registration form exists and the HTTP response code is 200. Only then should the URL enter GSA SER's input file. Without that gate, you are simply hoping that raw scrapes behave like verified lists, and they rarely do under production load. Self-verification partially erases the advantage of pre-made lists but requires scripting skills and steady maintenance that many operators prefer to offload.
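The gate described above boils down to two checks: the response code is 200 and the page contains a registration form. The sketch below uses Python's standard `html.parser` on an already-fetched response instead of a headless browser, which is a simpler stand-in and will miss forms rendered by JavaScript. The name `passes_gate` and the heuristic "a form with a password input counts as a signup form" are assumptions made for illustration.

```python
from html.parser import HTMLParser


class _FormScan(HTMLParser):
    """Flags a page that has a password input inside a <form> element."""

    def __init__(self):
        super().__init__()
        self.in_form = False
        self.found = False

    def handle_starttag(self, tag, attrs):
        if tag == "form":
            self.in_form = True
        elif tag == "input" and self.in_form:
            # The attribute value may be None for a bare attribute.
            input_type = (dict(attrs).get("type") or "").lower()
            if input_type == "password":
                self.found = True

    def handle_endtag(self, tag):
        if tag == "form":
            self.in_form = False


def passes_gate(status_code, html):
    """True only for a 200 response whose HTML looks like a signup page."""
    if status_code != 200:
        return False
    scanner = _FormScan()
    scanner.feed(html)
    return scanner.found


page = '<form action="/register"><input type="password" name="pw"></form>'
print(passes_gate(200, page))
print(passes_gate(404, page))
```

Because a login form also contains a password field, this heuristic over-accepts; a stricter gate would need platform-specific markers, which is the maintenance burden the paragraph above refers to.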

Economic and Time Trade-Offs

Scraping seems free because you are not paying a vendor, but the hidden cost sits in proxy bandwidth, electricity, and the lower return per thread-hour. Verified lists come with a purchase price, yet they compress the time from launch to a stable link graph. If your monetization depends on reaching indexing thresholds before a time-sensitive ranking window closes, the premium for a verified asset often pays for itself in the first two weeks of reduced trial-and-error. The trade-off flips when you have excess local resources and zero budget, making scraping the only viable path.
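One way to make this trade-off concrete is to compare cost per successful link under each approach. The figures below are invented purely to show the arithmetic; `cost_per_link` is a hypothetical helper, and real list prices, running costs, and yields will differ.

```python
def cost_per_link(fixed_cost, hourly_cost, hours, links):
    """Total spend divided by successful links (illustrative model only)."""
    if links <= 0:
        raise ValueError("links must be positive")
    return (fixed_cost + hourly_cost * hours) / links


# Made-up inputs: scraping has no purchase price but runs longer per link.
scraping = cost_per_link(fixed_cost=0, hourly_cost=0.5, hours=100, links=200)
# Made-up inputs: a verified list adds an upfront price but shortens the run.
verified = cost_per_link(fixed_cost=40, hourly_cost=0.5, hours=40, links=300)

print(round(scraping, 2), round(verified, 2))
```

With these assumed inputs the purchased list comes out cheaper per link, but setting `hourly_cost` near zero (spare hardware, no proxy bill) reverses the result, which mirrors the "excess local resources and zero budget" case.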

Security and Footprint Containment

Scraped URLs sometimes include honeypot domains or systems that log unverified probing aggressively. When GSA SER touches those, your IP ranges risk burning faster than with a vetted list where known toxic hosts have been removed. Verified list curators typically blacklist law enforcement trap domains, fake signups that never issue links, and hosts that immediately report spam to blocklist aggregators. Scraping pipelines rarely have that defensive intelligence layer unless the operator actively maintains an exclusion database.

Long-Term Campaign Stability

At the center of "GSA SER verified lists vs scraping" is the question of sustainability. A verified list gives you a controlled, measurable launch pad that helps you calibrate your proxy rotation and content insertion correctly. Once that baseline is established, incremental scraping can be introduced without destabilizing the project. Jumping straight into full-scale scraping without a verified foundation often leads to chaotic logs, blacklisted IPs, and a temptation to over-rotate settings because the true problem is bad targets rather than software configuration. Choose your entry point based on whether you value momentum or raw independence more, and remember that the best campaigns often blend the precision of verification with the freshness of targeted harvesting.
